
In our summer series on artificial intelligence, we take a look at the major issues surrounding AI: what is it? How have different sectors been transformed by its emergence? What are the advantages and dangers for cybersecurity?

In recent decades, fiction has been the main medium through which the public has encountered artificial intelligence: the replicants in Blade Runner, the droids in Star Wars and HAL in 2001: A Space Odyssey have left their mark on people’s minds, but few imagined that they could one day become reality. Thanks to progress in deep learning and natural language processing, AI is no longer a futuristic dream, but a contemporary reality. But how did we get here? That’s what we’re going to find out in this first part of our summer series.

The aim of AI is to create algorithms or methods that enable computers to learn by themselves from data, experience and interactions with the world around them. Human intelligence works in a similar way: we acquire knowledge by observing our surroundings, by trial and error, and by reflecting on our experiences. Each interaction brings new information, which we use to refine our understanding.
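To make "learning from data by trial and error" concrete, here is a minimal Python sketch. It is purely illustrative and not how any particular AI system is built: the function name `learn_slope`, the sample data and the learning rate are all assumptions chosen for this example. The program starts with a wrong guess about the rule linking inputs to outputs and corrects it a little each time it sees how far off it was.

```python
def learn_slope(examples, steps=200, learning_rate=0.01):
    """Estimate w in y ≈ w * x by repeatedly correcting a guess (hypothetical helper)."""
    w = 0.0  # initial guess, deliberately wrong
    for _ in range(steps):
        for x, y in examples:
            error = w * x - y               # how far off the current guess is
            w -= learning_rate * error * x  # nudge the guess to shrink that error
    return w


# Illustrative data only: the hidden rule is y = 3 * x.
examples = [(1, 3), (2, 6), (3, 9), (4, 12)]
learned = learn_slope(examples)
print(round(learned, 2))  # prints 3.0
```

The program is never told that the rule is "multiply by 3"; it recovers it from the examples alone, which is the basic idea behind learning from data.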

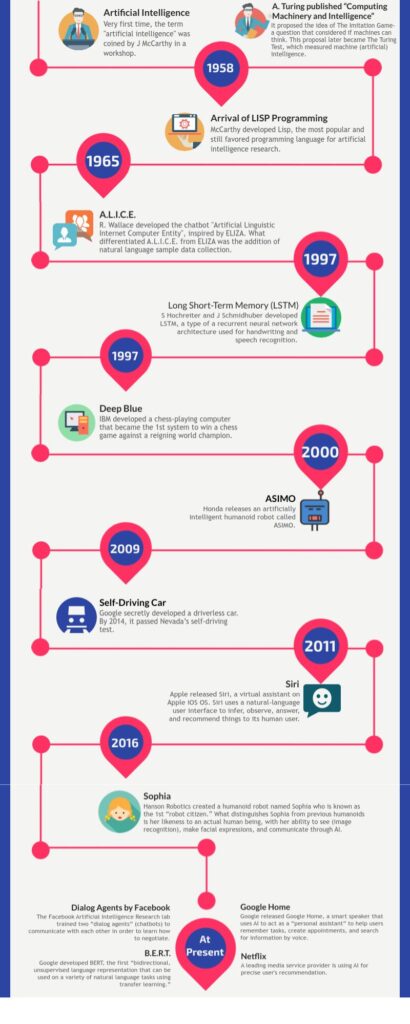

In 1950, the mathematician Alan Turing proposed a test of artificial intelligence intended to answer the question “can machines think?”: if a human judge converses with two interlocutors, one human and one machine, and cannot tell which is which, then the machine passes the test.

In the 2010s, several programs were claimed to have come close to passing the test, although there is no consensus on how many, or even on whether the test was actually passed. The chatbot Eugene Goostman, which in 2014 persuaded about a third of the judges that it was a 13-year-old Ukrainian boy through text conversations lasting around 5 minutes, is often considered to have come closest.

The term “artificial intelligence” was first used by John McCarthy in 1956 at the Dartmouth Conference, whose aim was to study intelligence in detail, so that a machine could be built to simulate it.

In the 1960s and 1970s, AI research focused on the development of expert systems designed to mimic the decisions made by human specialists in specific fields. These methods were frequently used in sectors such as engineering, finance and medicine.

In the 1980s, research programs took a renewed interest in machine learning, and neural networks, modeled on the structure and functioning of the human brain, attracted fresh attention. Earlier programs had already solved algebra problems formulated verbally rather than numerically, an early use of language by AIs that eventually led to the ChatGPT we know today.

The 1990s saw a shift in focus towards machine learning and data-driven approaches, thanks to the increased availability of digital data and progress in computing power. This period saw the rise of neural networks and the development of support vector machines, which enabled AI systems to learn from data, resulting in improved performance and adaptability. Natural language itself had been simulated in a strikingly realistic way as early as the 1960s, notably by the STUDENT program and by ELIZA, often described as the very first chatbot.

Things have really accelerated over the last 20 years. From the 2000s onward, progress in speech recognition, image recognition and natural language processing was made possible by the advent of deep learning, a branch of machine learning that uses deep neural networks.

Finally, in the 2010s, AI became a genuinely visible part of our daily lives through smartphones, virtual assistants and the chatbots found on many commercial sites, right up to the GPT revolution.

The recent AI revolution is largely attributed to the development of deep learning techniques and the emergence of large-scale neural networks, such as OpenAI’s Generative Pre-trained Transformer (GPT) series.

In 2015, OpenAI was co-founded by Elon Musk, Sam Altman, Greg Brockman, Ilya Sutskever, John Schulman and Wojciech Zaremba. The founders were aware of both the potential and the risks of AI, and wanted to promote these technologies in a way that would preserve safety.

The nonprofit created a capped-profit company in 2019, the year it first partnered with Microsoft, and the two extended that partnership in 2023: “Today, we’re announcing the third phase of our long-term partnership with OpenAI through a multi-year, multi-billion dollar investment aimed at accelerating AI breakthroughs to ensure these benefits are widely shared with the world.” (Microsoft statement, 2023)

While it is still easy to spot weaknesses in current AIs, the speed at which they are developing suggests that we are on the cusp of a new technological revolution, one that will reshape our daily lives as well as a growing number of professions. This article was not written using ChatGPT… but could it have been? Almost certainly, just like the code on this web page.

In the rest of our series, we’ll be looking at how different industries are evolving as a result of the introduction of these AIs, and what the repercussions – both positive and negative – might be in terms of cybersecurity.

In the meantime, don’t hesitate to contact us if you have content to protect, and come back in August for the continuation of our series on artificial intelligence.
