In early 2024, social networks were flooded with fake pornographic images of Taylor Swift. One of these images was viewed almost 50 million times on Twitter/X. It was not the first scandal of its kind, but the immense popularity of the singer (named Person of the Year 2023 by Time magazine) forced politicians to speak out and call for legislation on the subject. Deepfakes are currently insufficiently regulated. What are they, and why are they problematic?

The word “deepfake” is a contraction of “deep learning” and “fake”. Deepfakes are synthetic media that have been digitally manipulated to convincingly replace one person’s likeness (in a photo or video) with that of another. They can also be computer-generated images of human subjects that do not exist in real life.

Deepfakes originated in 2017, when a Reddit user posted manipulated pornographic clips on the site. In these videos, the faces of celebrities were swapped with those of pornographic film actors.

Today, many deepfakes are still pornographic.

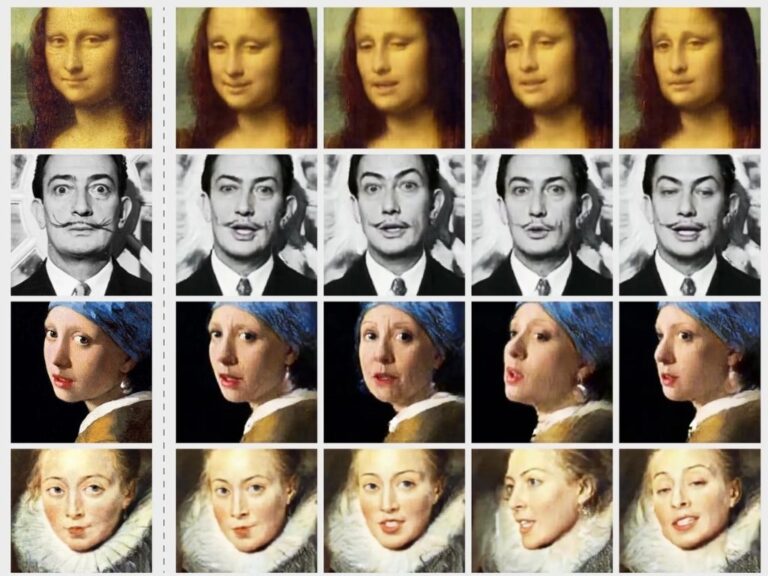

First, thousands of photos of the two people’s faces are run through an artificial intelligence algorithm called an encoder. The encoder finds and learns the similarities between the two faces and reduces them to their common features, compressing the images in the process. A second AI algorithm, the decoder, then learns to recover the faces from the compressed images. Two decoders are used: one reconstructs the face of person A, the other that of person B. To perform the swap, the compressed images of person A are fed into the decoder trained to reconstruct the face of person B; this decoder then rebuilds the face of person B with the expressions and attitudes of person A.
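The shared-encoder, two-decoder idea described above can be illustrated with a deliberately simplified sketch in Python with NumPy. This is a linear toy under stated assumptions, not how real deepfake tools are built: the "faces" are synthetic 16-number vectors rather than photos, identity and expression are assumed to be cleanly separable, an SVD stands in for training the encoder, and a least-squares fit stands in for training each decoder. All names (`fit_shared_encoder`, `fit_decoder`, `decode`, `expression_basis`, and so on) are hypothetical and chosen for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM, LATENT, N = 16, 4, 200  # toy sizes; real systems use images and deep networks

# Synthetic stand-in for photos: each "face" is a fixed identity vector plus a
# mix of expression directions that both people share (orthonormal rows).
expression_basis = np.linalg.qr(rng.normal(size=(DIM, LATENT)))[0].T  # (LATENT, DIM)


def make_identity():
    """Random identity vector with its expression component removed (toy assumption)."""
    v = rng.normal(size=DIM)
    return v - (v @ expression_basis.T) @ expression_basis


identity_a, identity_b = make_identity(), make_identity()
expressions_a = rng.normal(size=(N, LATENT))
expressions_b = rng.normal(size=(N, LATENT))
faces_a = identity_a + expressions_a @ expression_basis
faces_b = identity_b + expressions_b @ expression_basis


def fit_shared_encoder(faces_a, faces_b, latent):
    """Learn ONE encoder from both people: the main directions of variation they share."""
    centered = np.vstack([faces_a - faces_a.mean(axis=0),
                          faces_b - faces_b.mean(axis=0)])
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return vt[:latent].T  # (DIM, LATENT): multiply a face by this to compress it


def fit_decoder(codes, faces):
    """Fit a person-specific affine decoder (weights and bias) by least squares."""
    design = np.hstack([codes, np.ones((len(codes), 1))])
    solution, *_ = np.linalg.lstsq(design, faces, rcond=None)
    return solution[:-1], solution[-1]  # weights (LATENT, DIM), bias (DIM,)


def decode(codes, decoder):
    """Rebuild faces from compressed codes with one person's decoder."""
    weights, bias = decoder
    return codes @ weights + bias


encoder = fit_shared_encoder(faces_a, faces_b, LATENT)
decoder_a = fit_decoder(faces_a @ encoder, faces_a)  # reconstructs person A
decoder_b = fit_decoder(faces_b @ encoder, faces_b)  # reconstructs person B

# The swap: compress person A's faces, then rebuild them with B's decoder.
swapped = decode(faces_a @ encoder, decoder_b)

# In this toy we know what the ideal result is: B's identity, A's expressions.
ideal = identity_b + expressions_a @ expression_basis
print("swap matches ideal:", np.allclose(swapped, ideal, atol=1e-6))
```

The design choice that matters is that the encoder is shared while the decoders are not: because both people pass through the same compression, the codes mostly carry expression, and the identity is supplied by whichever decoder does the rebuilding. Real tools replace each linear step here with a deep neural network trained on thousands of images.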

For a convincing video, this operation must be carried out on every frame, but new techniques now enable unskilled people to produce deepfakes with just a few photos. There are now free tools available online that let anyone carry out these steps very easily, such as the https://deepfakesweb.com/ website.

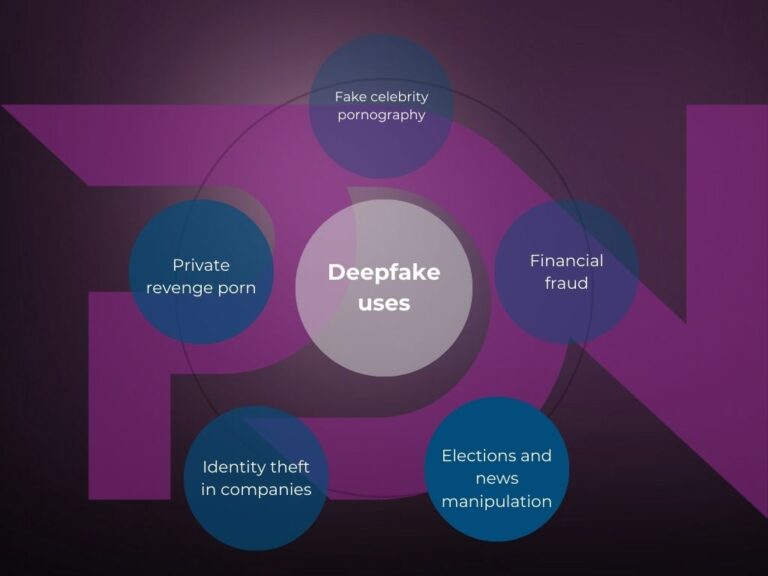

Deepfakes are used for manipulation, disinformation, defamation and humiliation, and public figures (mostly female stars) are the first to be targeted. Now that the technology has become much more accessible, private revenge porn is also one of its most frequent uses.

In a study carried out in September 2019, the artificial intelligence company Deeptrace found 15,000 deepfake videos, twice as many as nine months previously. Over 96% of this content was pornographic, and 99% of it mapped the faces of female celebrities onto porn performers. Inadequate moderation on many social networks, and particularly on Twitter/X, has been repeatedly highlighted. Politicians are also particularly targeted: fake images compromising high-profile personalities are generated, and fake phone calls imitating Joe Biden’s voice were recently made with the aim of discouraging voters from casting their ballots in the run-up to the forthcoming presidential election. False information about ongoing armed conflicts is also created in this way. Entire disinformation campaigns can thus be created in just a few clicks, by virtually anyone, even with limited technical skills and at low cost.

This technique is now also creating major security problems for companies. Recently, several employees of major companies have been tricked by phone calls reproducing the voices of executives, and even by a fake meeting on Zoom, into transferring tens of millions of dollars on the fictitious orders of superiors authorized to request such operations.

A VMware report revealed a 13% increase in deepfake attacks last year, with 66% of cybersecurity professionals saying they had witnessed one in the past year. Most of these attacks are carried out by e-mail (78%), which is linked to the rise in Business Email Compromise (BEC) attacks, in which attackers gain access to a corporate e-mail account and impersonate its owner in order to defraud the company, its users or its partners. According to the FBI, BEC attacks cost businesses $43.3 billion in just five years, from 2016 to 2021. Third-party meeting platforms (31%) and business collaboration software (27%) are increasingly used for BEC, and the IT industry is the main target of deepfake attacks (47%), followed by finance (22%) and telecommunications (13%).

It is therefore essential for companies to remain vigilant, and to implement deepfake detection technology and robust security measures to protect themselves against this type of attack.

Identity theft through deepfakes has thus become a worrying problem for corporate security. What’s more, as the technology rapidly evolves, these false images, voices and videos are becoming increasingly difficult to identify. The problem has now moved well beyond the private sphere, and can no longer be ignored by governments and businesses alike.

In our next article, we’ll look at what solutions can be adopted to fight this phenomenon. In the meantime, if you have a film, series, software or e-book to protect, don’t hesitate to call on our services by contacting one of our account managers; PDN has been a pioneer in cybersecurity and anti-piracy for over ten years, and we’re bound to have a solution to help you. Enjoy your reading, and see you soon!
